Public Member Functions | |

| BatchedNMSPlugin (NMSParameters param) | |

| BatchedNMSPlugin (const void *data, size_t length) | |

| ~BatchedNMSPlugin () override=default | |

| const char * | getPluginType () const override |

| Return the plugin type. More... | |

| const char * | getPluginVersion () const override |

| Return the plugin version. More... | |

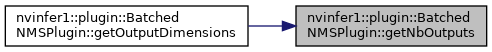

| int | getNbOutputs () const override |

| Get the number of outputs from the layer. More... | |

| Dims | getOutputDimensions (int index, const Dims *inputs, int nbInputDims) override |

| bool | supportsFormat (DataType type, PluginFormat format) const override |

| Check format support. More... | |

| size_t | getWorkspaceSize (int maxBatchSize) const override |

| int | enqueue (int batchSize, const void *const *inputs, void **outputs, void *workspace, cudaStream_t stream) override |

| int | initialize () override |

| Initialize the layer for execution. More... | |

| void | terminate () override |

| Release resources acquired during plugin layer initialization. More... | |

| size_t | getSerializationSize () const override |

| Find the size of the serialization buffer required. More... | |

| void | serialize (void *buffer) const override |

| Serialize the layer. More... | |

| void | destroy () override |

| Destroy the plugin object. More... | |

| void | setPluginNamespace (const char *libNamespace) override |

| Set the namespace that this plugin object belongs to. More... | |

| const char * | getPluginNamespace () const override |

| Return the namespace of the plugin object. More... | |

| void | setClipParam (bool clip) |

| nvinfer1::DataType | getOutputDataType (int index, const nvinfer1::DataType *inputType, int nbInputs) const override |

| bool | isOutputBroadcastAcrossBatch (int outputIndex, const bool *inputIsBroadcasted, int nbInputs) const override |

| bool | canBroadcastInputAcrossBatch (int inputIndex) const override |

| void | configurePlugin (const Dims *inputDims, int nbInputs, const Dims *outputDims, int nbOutputs, const DataType *inputTypes, const DataType *outputTypes, const bool *inputIsBroadcast, const bool *outputIsBroadcast, PluginFormat floatFormat, int maxBatchSize) override |

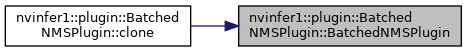

| IPluginV2Ext * | clone () const override |

| Clone the plugin object. More... | |

| virtual nvinfer1::DataType | getOutputDataType (int32_t index, const nvinfer1::DataType *inputTypes, int32_t nbInputs) const =0 |

| Return the DataType of the plugin output at the requested index. More... | |

| virtual bool | isOutputBroadcastAcrossBatch (int32_t outputIndex, const bool *inputIsBroadcasted, int32_t nbInputs) const =0 |

| Return true if output tensor is broadcast across a batch. More... | |

| virtual bool | canBroadcastInputAcrossBatch (int32_t inputIndex) const =0 |

| Return true if plugin can use input that is broadcast across batch without replication. More... | |

| virtual void | configurePlugin (const Dims *inputDims, int32_t nbInputs, const Dims *outputDims, int32_t nbOutputs, const DataType *inputTypes, const DataType *outputTypes, const bool *inputIsBroadcast, const bool *outputIsBroadcast, PluginFormat floatFormat, int32_t maxBatchSize)=0 |

| Configure the layer with input and output data types. More... | |

| virtual void | attachToContext (cudnnContext *, cublasContext *, IGpuAllocator *) |

| Attach the plugin object to an execution context and grant the plugin access to some context resources. | |

| virtual void | detachFromContext () |

| Detach the plugin object from its execution context. More... | |

| virtual Dims | getOutputDimensions (int32_t index, const Dims *inputs, int32_t nbInputDims)=0 |

| Get the dimension of an output tensor. More... | |

| virtual size_t | getWorkspaceSize (int32_t maxBatchSize) const =0 |

| Find the workspace size required by the layer. More... | |

| virtual int32_t | enqueue (int32_t batchSize, const void *const *inputs, void **outputs, void *workspace, cudaStream_t stream)=0 |

| Execute the layer. More... | |

Protected Member Functions | |

| int32_t | getTensorRTVersion () const |

| Return the API version with which this plugin was built. More... | |

| void | configureWithFormat (const Dims *, int32_t, const Dims *, int32_t, DataType, PluginFormat, int32_t) |

| Derived classes should not implement this. More... | |

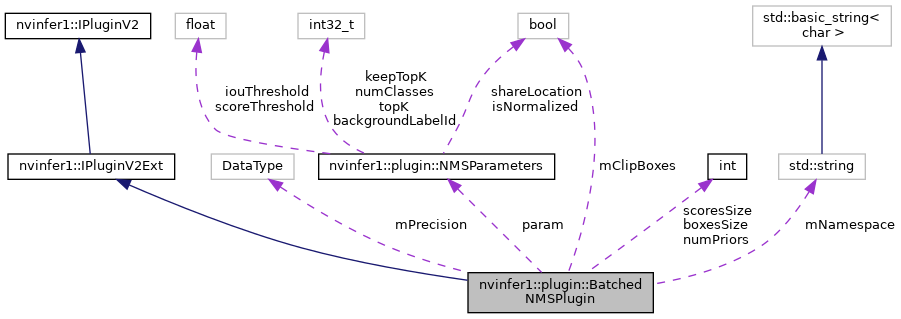

Private Attributes | |

| NMSParameters | param {} |

| int | boxesSize {} |

| int | scoresSize {} |

| int | numPriors {} |

| std::string | mNamespace |

| bool | mClipBoxes {} |

| DataType | mPrecision |

BatchedNMSPlugin::BatchedNMSPlugin (NMSParameters param)

BatchedNMSPlugin::BatchedNMSPlugin (const void *data, size_t length)

BatchedNMSPlugin::~BatchedNMSPlugin () [override, default]

const char * BatchedNMSPlugin::getPluginType () const [override, virtual]

Return the plugin type.

Should match the plugin name returned by the corresponding plugin creator.

Implements nvinfer1::IPluginV2.

const char * BatchedNMSPlugin::getPluginVersion () const [override, virtual]

Return the plugin version.

Should match the plugin version returned by the corresponding plugin creator.

Implements nvinfer1::IPluginV2.

int BatchedNMSPlugin::getNbOutputs () const [override, virtual]

Get the number of outputs from the layer.

This function is called by the implementations of INetworkDefinition and IBuilder. In particular, it is called prior to any call to initialize().

Implements nvinfer1::IPluginV2.

Dims BatchedNMSPlugin::getOutputDimensions (int index, const Dims *inputs, int nbInputDims) [override]

bool BatchedNMSPlugin::supportsFormat (DataType type, PluginFormat format) const [override, virtual]

Check format support.

| type | DataType requested. |

| format | PluginFormat requested. |

This function is called by the implementations of INetworkDefinition, IBuilder, and safe::ICudaEngine/ICudaEngine. In particular, it is called when creating an engine and when deserializing an engine.

Implements nvinfer1::IPluginV2.

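size_t BatchedNMSPlugin::getWorkspaceSize (int maxBatchSize) const [override]

int BatchedNMSPlugin::enqueue (int batchSize, const void *const *inputs, void **outputs, void *workspace, cudaStream_t stream) [override]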

int BatchedNMSPlugin::initialize () [override, virtual]

Initialize the layer for execution.

This is called when the engine is created.

Implements nvinfer1::IPluginV2.

void BatchedNMSPlugin::terminate () [override, virtual]

Release resources acquired during plugin layer initialization.

This is called when the engine is destroyed.

Implements nvinfer1::IPluginV2.

size_t BatchedNMSPlugin::getSerializationSize () const [override, virtual]

Find the size of the serialization buffer required.

Implements nvinfer1::IPluginV2.

void BatchedNMSPlugin::serialize (void *buffer) const [override, virtual]

Serialize the layer.

| buffer | A pointer to the buffer that receives the serialized data. The buffer size must equal the value returned by getSerializationSize(). |

Implements nvinfer1::IPluginV2.
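The contract between getSerializationSize(), serialize() and the `(const void *data, size_t length)` constructor can be illustrated with a minimal sketch. Everything below is a stand-in: `DemoState`, its fields, and the `demo*` helpers are hypothetical and do not reflect the real `NMSParameters` layout or the plugin's actual byte format.

```cpp
#include <cstddef>
#include <cstring>
#include <vector>

// Hypothetical plugin state (assumption: NOT the real NMSParameters layout).
struct DemoState {
    int numClasses;
    int topK;
    float scoreThreshold;
    bool clipBoxes;
};

// Plays the role of getSerializationSize(): the exact byte count written below.
std::size_t demoSerializationSize() {
    return 2 * sizeof(int) + sizeof(float) + sizeof(bool);
}

// Copy one field into the buffer and advance the write cursor.
template <typename T>
void writeField(char*& cursor, const T& value) {
    std::memcpy(cursor, &value, sizeof(T));
    cursor += sizeof(T);
}

// Copy one field out of the buffer and advance the read cursor.
template <typename T>
T readField(const char*& cursor) {
    T value;
    std::memcpy(&value, cursor, sizeof(T));
    cursor += sizeof(T);
    return value;
}

// Plays the role of serialize(void* buffer): fields are written in a fixed order.
void demoSerialize(const DemoState& state, void* buffer) {
    char* cursor = static_cast<char*>(buffer);
    writeField(cursor, state.numClasses);
    writeField(cursor, state.topK);
    writeField(cursor, state.scoreThreshold);
    writeField(cursor, state.clipBoxes);
}

// Plays the role of the (data, length) constructor: rejects a wrong-sized buffer,
// then reads the fields back in the same order they were written.
bool demoDeserialize(const void* data, std::size_t length, DemoState& out) {
    if (length != demoSerializationSize()) {
        return false;
    }
    const char* cursor = static_cast<const char*>(data);
    out.numClasses = readField<int>(cursor);
    out.topK = readField<int>(cursor);
    out.scoreThreshold = readField<float>(cursor);
    out.clipBoxes = readField<bool>(cursor);
    return true;
}
```

Writing field by field rather than copying the whole struct keeps padding bytes out of the buffer, so the reported size and the bytes actually written stay in agreement.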

void BatchedNMSPlugin::destroy () [override, virtual]

Destroy the plugin object.

This will be called when the network, builder or engine is destroyed.

Implements nvinfer1::IPluginV2.

void BatchedNMSPlugin::setPluginNamespace (const char *libNamespace) [override, virtual]

Set the namespace that this plugin object belongs to.

Ideally, all plugin objects from the same plugin library should have the same namespace.

Implements nvinfer1::IPluginV2.

const char * BatchedNMSPlugin::getPluginNamespace () const [override, virtual]

Return the namespace of the plugin object.

Implements nvinfer1::IPluginV2.

void BatchedNMSPlugin::setClipParam (bool clip)

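nvinfer1::DataType BatchedNMSPlugin::getOutputDataType (int index, const nvinfer1::DataType *inputType, int nbInputs) const [override]

bool BatchedNMSPlugin::isOutputBroadcastAcrossBatch (int outputIndex, const bool *inputIsBroadcasted, int nbInputs) const [override]

bool BatchedNMSPlugin::canBroadcastInputAcrossBatch (int inputIndex) const [override]

void BatchedNMSPlugin::configurePlugin (const Dims *inputDims, int nbInputs, const Dims *outputDims, int nbOutputs, const DataType *inputTypes, const DataType *outputTypes, const bool *inputIsBroadcast, const bool *outputIsBroadcast, PluginFormat floatFormat, int maxBatchSize) [override]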

IPluginV2Ext * BatchedNMSPlugin::clone () const [override, virtual]

Clone the plugin object.

This copies over internal plugin parameters as well and returns a new plugin object with these parameters. If the source plugin is pre-configured with configurePlugin(), the returned object should also be pre-configured. The returned object should allow attachToContext() with a new execution context. Cloned plugin objects can share the same per-engine immutable resource (e.g. weights) with the source object (e.g. via ref-counting) to avoid duplication.

Implements nvinfer1::IPluginV2Ext.
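The cloning rules above (copy parameters, preserve the configured state, share immutable resources by reference counting) can be sketched as follows. `DemoPlugin` and all of its members are hypothetical; the real clone() returns a raw `IPluginV2Ext *`, whereas this sketch uses `std::unique_ptr` purely to keep the example leak-free.

```cpp
#include <memory>
#include <utility>
#include <vector>

// Hypothetical plugin-like class (assumption: not the TensorRT interface).
class DemoPlugin {
public:
    DemoPlugin(int topK, std::shared_ptr<const std::vector<float>> weights)
        : mTopK(topK), mWeights(std::move(weights)) {}

    // Stand-in for configurePlugin(): records build-time information.
    void configure(int numPriors) {
        mNumPriors = numPriors;
        mConfigured = true;
    }

    // Copies the parameters and the configured state; the immutable weights are
    // shared through the shared_ptr instead of being duplicated.
    std::unique_ptr<DemoPlugin> clone() const {
        auto copy = std::make_unique<DemoPlugin>(mTopK, mWeights);
        copy->mNumPriors = mNumPriors;
        copy->mConfigured = mConfigured;
        return copy;
    }

    int topK() const { return mTopK; }
    int numPriors() const { return mNumPriors; }
    bool isConfigured() const { return mConfigured; }
    const std::vector<float>* weights() const { return mWeights.get(); }

private:
    int mTopK;
    int mNumPriors{0};
    bool mConfigured{false};
    std::shared_ptr<const std::vector<float>> mWeights;
};
```

Because the weights are held as `shared_ptr<const ...>`, the source and the clone refer to the same storage and neither can mutate it, which matches the "per-engine immutable resource" wording.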

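virtual nvinfer1::DataType getOutputDataType (int32_t index, const nvinfer1::DataType *inputTypes, int32_t nbInputs) const [pure virtual, inherited]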

Return the DataType of the plugin output at the requested index.

The default behavior should be to return the type of the first input, or DataType::kFLOAT if the layer has no inputs. The returned data type must have a format that is supported by the plugin.
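The default rule described above fits in a few lines. `DemoDataType` is a hypothetical stand-in for `nvinfer1::DataType`, and `defaultOutputType` is an illustrative helper, not part of the TensorRT API.

```cpp
#include <cstdint>

// Hypothetical stand-in for nvinfer1::DataType (assumption).
enum class DemoDataType : std::int32_t { kFLOAT, kHALF, kINT8, kINT32 };

// Return the type of the first input, or kFLOAT when the layer has no inputs.
DemoDataType defaultOutputType(const DemoDataType* inputTypes, std::int32_t nbInputs) {
    if (inputTypes == nullptr || nbInputs <= 0) {
        return DemoDataType::kFLOAT;
    }
    return inputTypes[0];
}
```

A plugin whose outputs differ in type from its inputs (for example, an integer count output) would need to branch on `index` instead of applying this default.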

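virtual bool isOutputBroadcastAcrossBatch (int32_t outputIndex, const bool *inputIsBroadcasted, int32_t nbInputs) const [pure virtual, inherited]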

Return true if output tensor is broadcast across a batch.

| outputIndex | The index of the output |

| inputIsBroadcasted | The ith element is true if the tensor for the ith input is broadcast across a batch. |

| nbInputs | The number of inputs |

The values in inputIsBroadcasted refer to broadcasting at the semantic level; that is, they are unaffected by whether canBroadcastInputAcrossBatch requests physical replication of the values.

virtual bool canBroadcastInputAcrossBatch (int32_t inputIndex) const [pure virtual, inherited]

Return true if plugin can use input that is broadcast across batch without replication.

| inputIndex | Index of input that could be broadcast. |

For each input whose tensor is semantically broadcast across a batch, TensorRT calls this method before calling configurePlugin. If canBroadcastInputAcrossBatch returns true, TensorRT will not replicate the input tensor; i.e., there will be a single copy that the plugin should share across the batch. If it returns false, TensorRT will replicate the input tensor so that it appears like a non-broadcasted tensor.

This method is called only for inputs that can be broadcast.

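virtual void configurePlugin (const Dims *inputDims, int32_t nbInputs, const Dims *outputDims, int32_t nbOutputs, const DataType *inputTypes, const DataType *outputTypes, const bool *inputIsBroadcast, const bool *outputIsBroadcast, PluginFormat floatFormat, int32_t maxBatchSize) [pure virtual, inherited]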

Configure the layer with input and output data types.

This function is called by the builder prior to initialize(). It provides an opportunity for the layer to make algorithm choices on the basis of its weights, dimensions, data types and maximum batch size.

| inputDims | The input tensor dimensions. |

| nbInputs | The number of inputs. |

| outputDims | The output tensor dimensions. |

| nbOutputs | The number of outputs. |

| inputTypes | The data types selected for the plugin inputs. |

| outputTypes | The data types selected for the plugin outputs. |

| inputIsBroadcast | True for each input that the plugin must broadcast across the batch. |

| outputIsBroadcast | True for each output that TensorRT will broadcast across the batch. |

| floatFormat | The format selected for the engine for the floating point inputs/outputs. |

| maxBatchSize | The maximum batch size. |

The dimensions passed here do not include the outermost batch size (i.e. for 2-D image networks, they will be 3-dimensional CHW dimensions). When inputIsBroadcast or outputIsBroadcast is true, the outermost batch size for that input or output should be treated as if it is one. inputIsBroadcast[i] is true only if the input is semantically broadcast across the batch and canBroadcastInputAcrossBatch(i) returned true. outputIsBroadcast[i] is true only if isOutputBroadcastAcrossBatch(i) returned true.
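The rule for how inputIsBroadcast is derived can be sketched as follows. This is an illustration of the stated semantics only, not TensorRT's internal code; `resolveInputIsBroadcast` and `effectiveBatch` are hypothetical helper names.

```cpp
#include <cstddef>
#include <functional>
#include <vector>

// inputIsBroadcast[i] is true only if input i is semantically broadcast AND the
// plugin's canBroadcastInputAcrossBatch(i) returned true. The callback is queried
// only for semantically broadcast inputs, thanks to short-circuit evaluation.
std::vector<bool> resolveInputIsBroadcast(
    const std::vector<bool>& semanticallyBroadcast,
    const std::function<bool(int)>& canBroadcast) {
    std::vector<bool> result(semanticallyBroadcast.size(), false);
    for (std::size_t i = 0; i < semanticallyBroadcast.size(); ++i) {
        result[i] = semanticallyBroadcast[i] && canBroadcast(static_cast<int>(i));
    }
    return result;
}

// A broadcast tensor is treated as if its outermost batch size were one.
int effectiveBatch(bool isBroadcast, int batchSize) {
    return isBroadcast ? 1 : batchSize;
}
```

For an input that is semantically broadcast but for which the plugin returns false, the flag stays false and the tensor is treated as a regular, replicated per-sample tensor.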

virtual void attachToContext (cudnnContext *, cublasContext *, IGpuAllocator *) [inline, virtual, inherited]

Attach the plugin object to an execution context and grant the plugin access to some context resources.

| cudnn | The cuDNN context handle of the execution context |

| cublas | The cuBLAS context handle of the execution context |

| allocator | The allocator used by the execution context |

This function is called automatically for each plugin when a new execution context is created. If the plugin needs per-context resources, it can allocate them here. The plugin can also obtain the context-owned cuDNN and cuBLAS handles here.

Reimplemented in nvinfer1::plugin::ProposalPlugin, nvinfer1::plugin::CropAndResizePlugin, nvinfer1::plugin::SpecialSlice, nvinfer1::plugin::FlattenConcat, nvinfer1::plugin::ProposalLayer, nvinfer1::plugin::GenerateDetection, nvinfer1::plugin::MultilevelCropAndResize, nvinfer1::plugin::PyramidROIAlign, nvinfer1::plugin::DetectionLayer, nvinfer1::plugin::MultilevelProposeROI, nvinfer1::plugin::Normalize, nvinfer1::plugin::ResizeNearest, nvinfer1::plugin::DetectionOutput, nvinfer1::plugin::RPROIPlugin, nvinfer1::plugin::PriorBox, nvinfer1::plugin::Region, nvinfer1::plugin::Reorg, nvinfer1::plugin::GridAnchorGenerator, nvinfer1::plugin::GroupNormalizationPlugin, nvinfer1::plugin::InstanceNormalizationPlugin, and nvinfer1::plugin::SplitPlugin.

virtual void detachFromContext () [inline, virtual, inherited]

Detach the plugin object from its execution context.

This function is called automatically for each plugin when an execution context is destroyed. If the plugin owns per-context resources, it can release them here.

Reimplemented in nvinfer1::plugin::SplitPlugin, nvinfer1::plugin::ProposalPlugin, nvinfer1::plugin::CropAndResizePlugin, nvinfer1::plugin::SpecialSlice, nvinfer1::plugin::ProposalLayer, nvinfer1::plugin::GenerateDetection, nvinfer1::plugin::MultilevelCropAndResize, nvinfer1::plugin::PyramidROIAlign, nvinfer1::plugin::DetectionLayer, nvinfer1::plugin::MultilevelProposeROI, nvinfer1::plugin::Normalize, nvinfer1::plugin::ResizeNearest, nvinfer1::plugin::DetectionOutput, nvinfer1::plugin::RPROIPlugin, nvinfer1::plugin::GroupNormalizationPlugin, nvinfer1::plugin::PriorBox, nvinfer1::plugin::Region, nvinfer1::plugin::InstanceNormalizationPlugin, nvinfer1::plugin::Reorg, nvinfer1::plugin::GridAnchorGenerator, and nvinfer1::plugin::FlattenConcat.

int32_t getTensorRTVersion () const [inline, protected, virtual, inherited]

Return the API version with which this plugin was built.

The upper byte is reserved by TensorRT and is used to differentiate this from IPluginV2.

Do not override this method as it is used by the TensorRT library to maintain backwards-compatibility with plugins.

Reimplemented from nvinfer1::IPluginV2.
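To make the "upper byte" remark concrete, here is a sketch of one way a tag can share a 32-bit value with a version number. The tag values and the assumption that the version occupies the lower 24 bits are hypothetical; the actual encoding is internal to TensorRT.

```cpp
#include <cstdint>

// Hypothetical interface tags (assumption: the real values are TensorRT-internal).
constexpr std::uint32_t kDemoTagV2 = 0;
constexpr std::uint32_t kDemoTagV2Ext = 1;

// Place the tag in the upper byte and the version in the remaining 24 bits.
constexpr std::uint32_t packVersion(std::uint32_t tag, std::uint32_t version) {
    return (tag << 24) | (version & 0x00FFFFFFu);
}

// Recover the tag from the upper byte.
constexpr std::uint32_t interfaceTag(std::uint32_t packed) {
    return packed >> 24;
}

// Recover the version from the lower 24 bits.
constexpr std::uint32_t libraryVersion(std::uint32_t packed) {
    return packed & 0x00FFFFFFu;
}
```

Under this scheme two plugins built against the same library version still report different values when they implement different interfaces, which is why a derived class should leave the method alone.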

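void configureWithFormat (const Dims *, int32_t, const Dims *, int32_t, DataType, PluginFormat, int32_t) [inline, protected, virtual, inherited]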

Derived classes should not implement this.

In a C++11 API it would be override final.

Implements nvinfer1::IPluginV2.

virtual Dims getOutputDimensions (int32_t index, const Dims *inputs, int32_t nbInputDims) [pure virtual, inherited]

Get the dimension of an output tensor.

| index | The index of the output tensor. |

| inputs | The input tensors. |

| nbInputDims | The number of input tensors. |

This function is called by the implementations of INetworkDefinition and IBuilder. In particular, it is called prior to any call to initialize().

virtual size_t getWorkspaceSize (int32_t maxBatchSize) const [pure virtual, inherited]

Find the workspace size required by the layer.

This function is called during engine startup, after initialize(). The workspace size returned should be sufficient for any batch size up to the maximum.
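Because the returned size must cover every batch size up to the maximum, a common approach is to size the scratch buffers for `maxBatchSize` directly. The sketch below assumes a hypothetical layout (one float score and one 32-bit index per candidate box, each region aligned to 256 bytes); it is not the sizing used by BatchedNMSPlugin.

```cpp
#include <cstddef>
#include <cstdint>

// Hypothetical alignment for each scratch region (assumption).
constexpr std::size_t kDemoAlignment = 256;

// Round bytes up to the next multiple of alignment.
std::size_t alignUp(std::size_t bytes, std::size_t alignment) {
    return (bytes + alignment - 1) / alignment * alignment;
}

// Size two scratch regions for the largest batch the engine may run.
std::size_t demoWorkspaceSize(int maxBatchSize, int numPriors, int numClasses) {
    const std::size_t candidates = static_cast<std::size_t>(maxBatchSize) *
                                   static_cast<std::size_t>(numPriors) *
                                   static_cast<std::size_t>(numClasses);
    const std::size_t scoreBytes = alignUp(candidates * sizeof(float), kDemoAlignment);
    const std::size_t indexBytes = alignUp(candidates * sizeof(std::int32_t), kDemoAlignment);
    return scoreBytes + indexBytes;
}
```

Since the result never decreases as `maxBatchSize` grows, a workspace sized this way is also sufficient for any smaller batch passed to enqueue().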

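virtual int32_t enqueue (int32_t batchSize, const void *const *inputs, void **outputs, void *workspace, cudaStream_t stream) [pure virtual, inherited]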

Execute the layer.

| batchSize | The number of inputs in the batch. |

| inputs | The memory for the input tensors. |

| outputs | The memory for the output tensors. |

| workspace | Workspace for execution. |

| stream | The stream in which to execute the kernels. |

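NMSParameters BatchedNMSPlugin::param {} [private]

int BatchedNMSPlugin::boxesSize {} [private]

int BatchedNMSPlugin::scoresSize {} [private]

int BatchedNMSPlugin::numPriors {} [private]

std::string BatchedNMSPlugin::mNamespace [private]

bool BatchedNMSPlugin::mClipBoxes {} [private]

DataType BatchedNMSPlugin::mPrecision [private]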